The QA Review Playbook

03 Nov

Everything you need to know about managing quality assurance reviews on different types of content. This playbook covers everything from front-end testing to stakeholder feedback.

Introduction – Why QA

The Quality Assurance (QA) Review process measures and reviews the content/product quality. The process is designed to encourage and support high-quality reviews and create a well-reviewed final product.

This playbook includes a Review Quality Checklist, a QA review tool, supportive strategies, and workflows to collect and manage impactful feedback during a QA review. Without this, you risk reaching the end of your test period with a mountain of ambiguous data and no clear plan for how to best use it.

Is This Resource For You?

This whitepaper is primarily intended for individuals involved in the development of digital content. This could be images, PDFs, videos, websites, or SCORM/eLearning courses. This typically includes QA reviewers, product managers, beta testers, and others tasked with executing a QA review in preparation for their product launch.

In this playbook, you’ll learn:

- How to manage cross-functional QA reviews

- Key roles and workflows

- How to track bugs and feedback effectively

- Tools to streamline website QA (zipBoard, Jira, etc.)

The QA Review Checklist

The following checklist was developed as a practical tool to help reviewers assess content against pre-set quality and feedback criteria. Every QA comment should meet these criteria.

#Note that each organization can have its own quality and feedback criteria that vary based on region, industry, demographics, etc. This checklist is provided as a general one.

First Criterion – Robustness

Criterion: Robustness

Definition: The review is thorough, complete, and credible.

Interpretation:

- The review contains detailed info on each issue, including meaningful and clearly expressed descriptions of what is expected.

- Comments align with the given purpose of the QA review.

- The review addresses all applicable test criteria and does not include information that is not relevant to the test.

- All comments on data/figures are factually correct and free of confrontational questions.

- The comment contains enough context for the assignee to understand.

A Note About the Criteria Given in this Resource

zipBoard is designed to offer a complete QA review solution for different industries. Given that different industries have their own criteria, we’ve gone out and created these acceptability criteria based on expert opinions, which are influenced by the scientific knowledge and/or experience of individual users.

Second Criterion – Appropriateness

Criterion: Appropriateness

Definition: Review comments are fair, understandable, confidential, and respectful.

Interpretation:

- The review respects the Conflict of Interest and Confidentiality Policy.

- Absence of comments that suggest bias against the content creator due to their demographics and background.

- The review is original and written in clear and understandable language.

- Absence of comments that are arrogant or dismissive.

Final Criterion – Utility

Criterion: Utility

Definition: The review provides feedback that addresses the needs of the organization, clients, or end users.

Interpretation:

- Review comments are constructive and may help the creator/dev to improve their future versions and/or advance their research.

- The review contains information that allows other reviewers to understand and collaborate on the reviewer’s comment(s).

- If the comment is to be assigned as a task internally, there must be enough detail for the assignee to take it on.
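The three criteria above can be turned into a quick self-check that a reviewer runs before submitting a comment. The following is only a minimal sketch under stated assumptions: the `ReviewComment` fields, the `DISMISSIVE_PHRASES` list, and the `checklist_gaps` function are hypothetical names chosen for illustration, not part of zipBoard or any other tool, and a real team would substitute its own criteria.

```python
from dataclasses import dataclass


@dataclass
class ReviewComment:
    """One QA comment; the field names are illustrative, not a tool's schema."""
    issue: str            # what the reviewer observed
    expected: str = ""    # what should happen instead (Robustness)
    context: str = ""     # page, screen, or step the comment refers to (Robustness)
    suggestion: str = ""  # a constructive next step for the assignee (Utility)


# Hypothetical phrases a team might treat as dismissive (Appropriateness).
DISMISSIVE_PHRASES = ("obviously", "lazy", "terrible")


def checklist_gaps(comment: ReviewComment) -> list[str]:
    """Return the checklist items this comment does not yet satisfy."""
    gaps = []
    if not comment.expected.strip():
        gaps.append("robustness: describe what is expected")
    if not comment.context.strip():
        gaps.append("robustness: add context for the assignee")
    text = f"{comment.issue} {comment.suggestion}".lower()
    if any(phrase in text for phrase in DISMISSIVE_PHRASES):
        gaps.append("appropriateness: rephrase dismissive wording")
    if not comment.suggestion.strip():
        gaps.append("utility: add a constructive suggestion")
    return gaps


vague = ReviewComment(issue="The heading looks off.")
clear = ReviewComment(
    issue="The button colour blends into the label text.",
    expected="The button label is readable against its background.",
    context="Checkout page, primary button",
    suggestion="Try a slightly darker shade for the button.",
)
print(checklist_gaps(vague))
print(checklist_gaps(clear))  # an empty list means no gaps were detected
```

A check like this cannot judge fairness or factual accuracy; it only catches comments that are missing the details an assignee would need.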

What Psychology Says About QA Review

QA reviewers need to understand the aim of the content before the review to provide high-quality feedback. In a typical digital content QA review, the average Joe will rely on various team communication channels, emails, spreadsheets, phone calls, and Zoom meetings to provide honest feedback.

Couple that with a ton of ambiguous feedback scattered across those channels, and you’ve just pre-booked yourself a few more Zoom calls. You can significantly streamline this entire process (and increase the amount of contextual feedback you collect) by understanding QA psychology and using a QA review tool built for this purpose. A skilled QA reviewer with the right tool and information can identify minute issues, create the environment for high user acceptance, and streamline the feedback process to gather targeted, high-quality feedback.

Here are four psychological tips we’ve identified along the way to help with QA reviews.

#1 Focus on People’s Motivation

What drives people’s behavior? Freud said it is unresolved conflicts from childhood, Maslow talked about the hierarchy of needs, Frankl said that it’s the meaning that drives us and James said it’s learned behavioural patterns.

The reviewer should consider what drives the final user. Being able to put yourself in someone else’s shoes and view the world from their point of view is frequently quite difficult, but it is also very valuable. Testing professionals must, in our experience, do that. Consider the issues faced by users or the deliverable wanted by the client as your own for a moment. Consider yourself as someone who needs that content for a certain purpose. It aids in your comprehension of such behaviour.

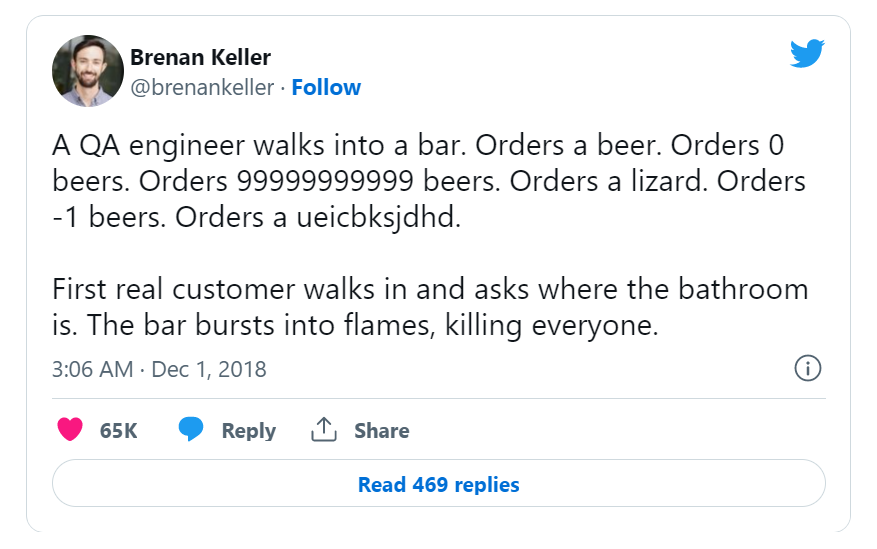

And we’d love to demonstrate why it’s important with this lovely tweet from Brenan below.

If we concentrate only on creating something that is “perfect” in our own eyes, without understanding the end user’s POV, we risk producing content that falls short in our users’ eyes.

There are a few simple tricks to reducing friction and maximizing the QA reviewer’s effort:

Make Sure the Reviewer Has All the Info

Your testers shouldn’t need to assume information about the end user or what exactly the QA review is for. Say you have a reviewer providing feedback on an eLearning course. Without knowing whom the course is tailored for, what their priorities are, and how they want the course to be presented, that reviewer wouldn’t be able to provide proper feedback on it.

Create a Single Line of Contact

When possible, you should try to keep the contact within the same review environment. Using multiple sources of communication for the same purpose can hinder future reviews of the content.

Following these specific best practices greatly increases both the level and quality of your QA feedback.

#2 Consider Carefully What People Perceive

Step outside of yourself and try to shift your focus to the other person: the one who will be taking on the task of resolving the issues you’ve outlined. Is the feedback you gave enough to take it forward? Does the assignee know what should be done instead or how to go about it? Anyone can give feedback by saying, “Change the colour.”

Think about it from the reader’s perspective. That comment will almost certainly raise another question: “What colour?” And what thought process should go into deciding the colour? Better feedback might be, “The colour doesn’t match the text. A slightly darker shade should do.”

Shift the focus from the content to the people. This should be the end user always for a QA reviewer. But note that your comments will only land on someone working on the piece and not the client. So, tailor your feedback accordingly.

Here is a simple trick to reducing friction and maximizing the QA reviewer’s effort:

Always Ensure That Your Feedback Is In Context

Explaining something visual using a conventional method of review takes up unnecessary time and effort from both the reviewer and the content owner/developer. There are QA review tools that specifically help with this.

#3 Acknowledge and Question Your Assumptions

It is hard to avoid making assumptions; they are a part of all of us. Even if you try to get rid of them, you might just be deluding yourself into believing that you have.

But that does not imply that you have no control over them. The best course of action is to admit having them. And then clarify the doubts with the content owner, instead of running away with your assumption. This will eventually lead to a better QA review and end product.

The big problem with assuming is that your experience is totally different from the experiences of others.

Here is a simple trick to reducing friction and maximizing the QA reviewer’s effort:

Use a Centralized QA Review and Feedback Platform

A centralized QA platform not only makes it easier to locate content pieces, their versions, and the feedback but it also makes QA reviewers more likely to clarify their doubts when they arise. As they leave a trail of activity along the way, this will benefit future reviewers.

This will greatly increase both the level and quality of your QA feedback.

#4 There Is No Stupid Feedback, Only Poorly Constructed Feedback

You can tell someone prioritizes efficiency if they grumble about everyone being late to meetings. You can know someone cares a lot about quality if they complain about the number of bugs in a web page experience. That implies that you can tell a person holds a certain value highly when they express concern about a problem.

However, how we take these comments can differ. The QA reviewer might have added the comment to help with the product, yet it might rub the person who develops it the wrong way.

Ongoing QA Review Cycle

A large part of the feedback you’ll collect during your review will come from ongoing reviews. As each reviewer tests your content/product, they will have issues or ideas about it. They might determine that some issues are detrimental to the goal while others are unnecessary. Given the organic nature of this feedback, you’ll need pre-determined processes in place to collect, triage, analyze, and prioritize it.

That way, as you create newer versions of the content, each time taking the reviewers’ feedback into account, you’ll begin to amass a healthy amount of usable, high-quality reviews from QA to inform your imminent content decisions.
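To make the collect, triage, and prioritize steps concrete, here is a small sketch of one possible triage pass. It assumes a four-level severity scale and treats two comments with the same summary (ignoring case) as duplicates; both choices, along with the names `Feedback`, `SEVERITY_RANK`, and `prioritize`, are assumptions made for this example rather than a prescribed process.

```python
from collections import Counter
from dataclasses import dataclass

# Assumed severity scale; a lower rank means the item is handled first.
SEVERITY_RANK = {"blocker": 0, "major": 1, "minor": 2, "idea": 3}


@dataclass(frozen=True)
class Feedback:
    summary: str
    severity: str  # one of the SEVERITY_RANK keys
    reviewer: str


def prioritize(items: list[Feedback]) -> list[tuple[str, str, int]]:
    """Merge duplicate reports, then order by severity and by report count."""
    reports = Counter()
    severity = {}
    for item in items:
        key = item.summary.strip().lower()
        reports[key] += 1
        # Keep the most severe label any reviewer gave this issue.
        current = severity.get(key)
        if current is None or SEVERITY_RANK[item.severity] < SEVERITY_RANK[current]:
            severity[key] = item.severity
    return sorted(
        ((key, severity[key], count) for key, count in reports.items()),
        key=lambda row: (SEVERITY_RANK[row[1]], -row[2], row[0]),
    )


collected = [
    Feedback("Video has no captions", "major", "Ana"),
    Feedback("Quiz cannot be submitted", "blocker", "Ben"),
    Feedback("video has no captions", "minor", "Cy"),
    Feedback("Add a dark theme", "idea", "Ana"),
]
for summary, level, count in prioritize(collected):
    print(f"{level:8} x{count}  {summary}")
```

Sorting on severity first and report count second keeps a single blocker ahead of a frequently reported minor issue, which is usually the safer default when a release date is close.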

QA Review Cycle Objectives

This cycle for quality assurance consists of four steps: Plan, Do, Check, and Act.

PLAN

Organizations should plan, set process-related goals, and identify the processes needed to create a high-quality final product.

DO

Testing and implementation of modifications.

CHECK

Monitoring of modifications, and evaluation of whether they achieve the specified goals.

ACT

Re-implement the modifications as prescribed in the previous step.

Keep in Mind

Since all of these steps happen in a loop, there might seem to be some overlap in the QA review process. However, we shouldn’t ignore any of these steps. Once the team is aware of these steps and their goals, there will be less friction and confusion among them.
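The looping nature of the Plan, Do, Check, Act cycle can be sketched in a few lines. This is only an illustration: the `Phase` enum and the `next_phase` helper are hypothetical names, and the phase descriptions are paraphrased from the steps above.

```python
from enum import Enum


class Phase(Enum):
    PLAN = "set goals and identify the processes needed"
    DO = "test and implement modifications"
    CHECK = "monitor modifications and evaluate them against the goals"
    ACT = "re-implement the modifications as prescribed by the check"


def next_phase(current: Phase) -> Phase:
    """Return the phase that follows `current`, wrapping from ACT back to PLAN."""
    order = list(Phase)
    return order[(order.index(current) + 1) % len(order)]


phase = Phase.PLAN
for _ in range(5):  # one full loop, then the first step of the next loop
    print(f"{phase.name}: {phase.value}")
    phase = next_phase(phase)
```

Because `next_phase` wraps around, the cycle never reaches a terminal state, which mirrors the point that no step should be skipped even when the steps seem to overlap.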

QA Review for Different Content

Now let’s touch on the different types of content you can review, using a QA review tool. For this playbook, we make use of zipBoard.

Web Dev QA

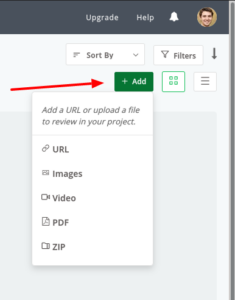

The first step is to add your content to zipBoard. In this case, that is a URL. If you’ve created a mockup of the page, you can also upload it as a PDF or an image.

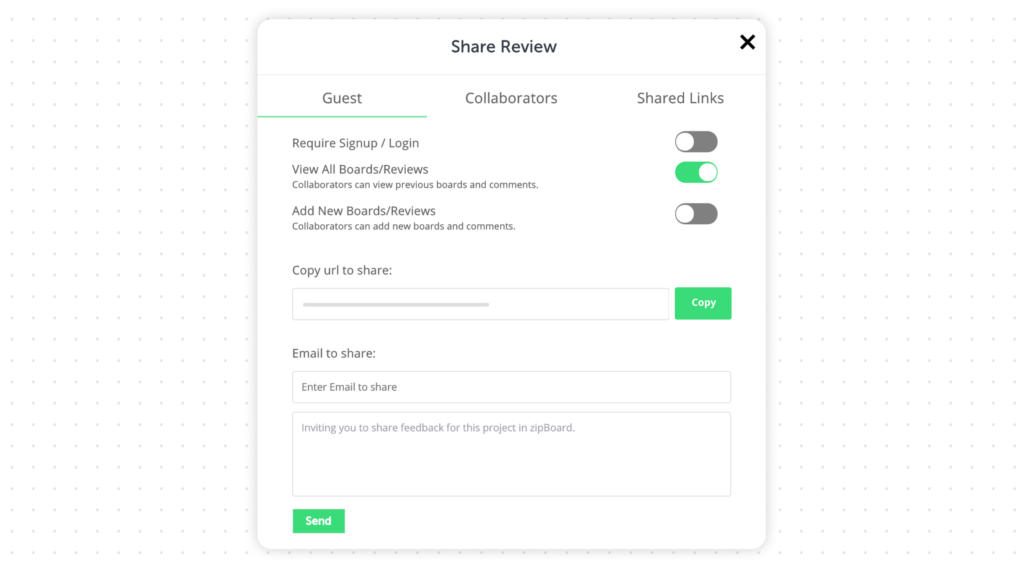

Once uploaded, you can share the file with your QA reviewers or invite them to the project.

#Note that you can add unlimited collaborators in zipBoard. If you do not want to give them access to the project, you can toggle those options while sharing.

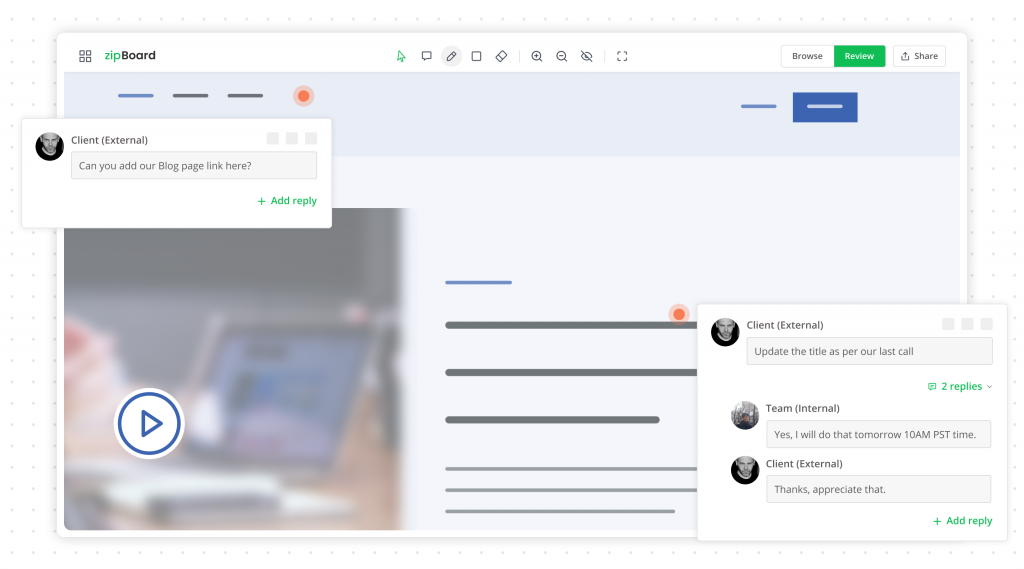

Then the QA reviewers can easily add comments and collaborate on the webpage. All their reviews will be consolidated for the team to view.

eLearning QA

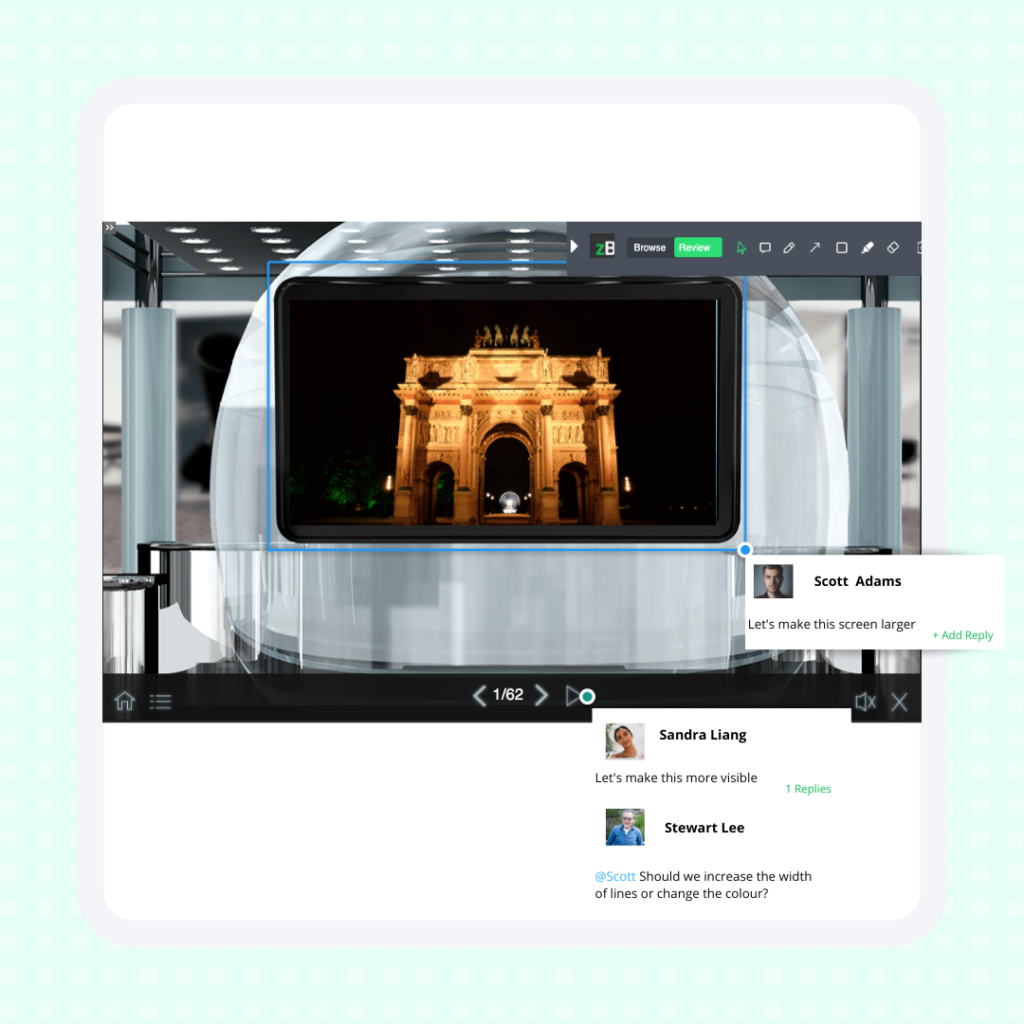

There are generally three ways eLearning content is developed. Depending on your case, you can add them accordingly to zipBoard.

- SCORM course – ZIP

- In-house dev tool – URL/API integration

- Authoring tools – URL/API integration

The QA reviewers can then start reviewing the eLearning content.

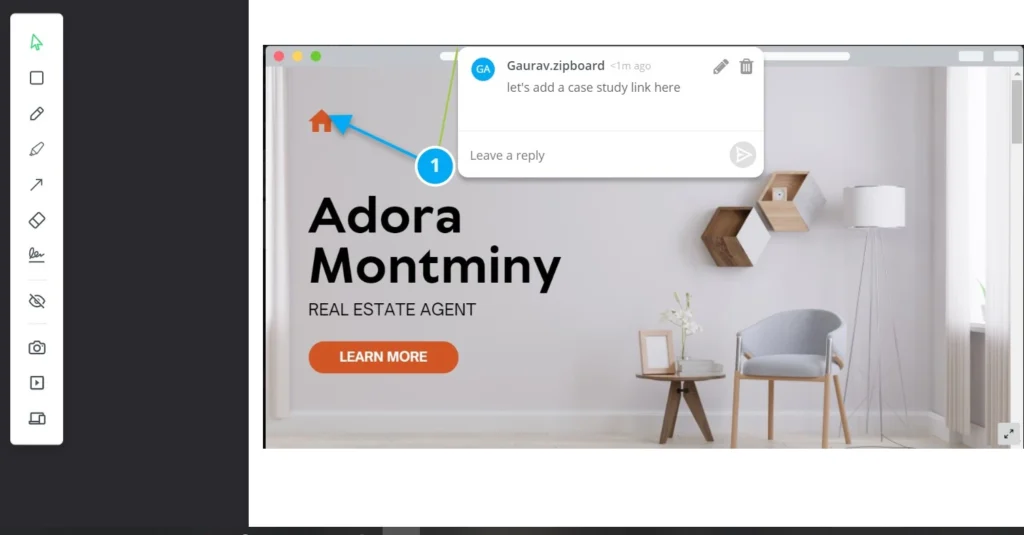

Creative Design QA

Creative designs or images are an integral part of most marketing and design teams. You can add images directly to zipBoard.

The QA reviewers can then start reviewing the images. In the image below you can see the arrow annotation has been used to point out exactly where the reviewer wants the link to be placed. No context is lost.

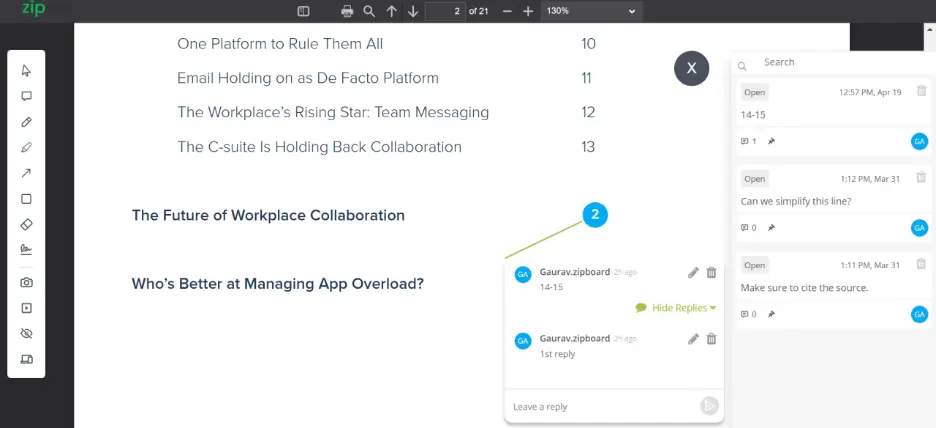

PDF Document QA

Documents have become a staple of most modern organizations. From company documents to construction submittals and technical documents, PDFs have started to rule the world. However, many QA reviewers find it hard to provide contextual feedback on PDFs. There can be hundreds of pages and thousands of lines.

Using a QA review tool like zipBoard, however, makes it easy to review and add contextual feedback to PDFs. Simply add the document as a PDF and get it reviewed.

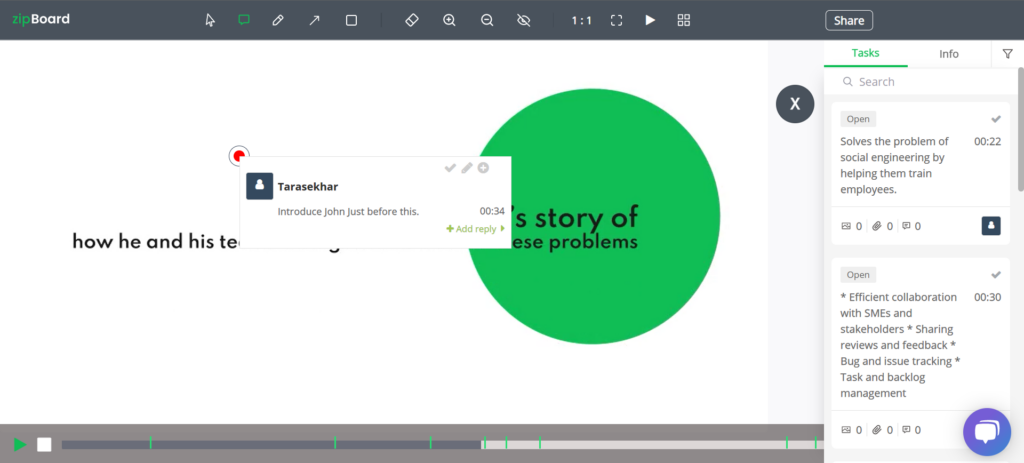

Video QA

One of the hardest content types to review is video. With the element of moving frames, letting the user know which timestamp the feedback is for becomes extra challenging. The conventional method would, at best, require you to take multiple screenshots, annotate them in a third-party tool, and share them with reviewers. At worst, you end up in an hour-long screen-share meeting with the video dev for a 20-minute video.

Here, we’re going to add the video to zipBoard. Once added, the QA reviewer can start their review in no time and provide time-stamped feedback.

#Note the small lines above? They are the timestamps that you can click to navigate directly to the comment.
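For teams that want to see how time-stamped feedback can be represented, here is a minimal sketch. It assumes each comment is stored with its playback position in seconds; the `to_timestamp` helper and the sample comments are made up for illustration and do not describe how zipBoard stores video feedback.

```python
def to_timestamp(seconds: float) -> str:
    """Format a playback position in seconds as H:MM:SS or M:SS."""
    total = int(seconds)
    hours, remainder = divmod(total, 3600)
    minutes, secs = divmod(remainder, 60)
    if hours:
        return f"{hours}:{minutes:02d}:{secs:02d}"
    return f"{minutes}:{secs:02d}"


# Hypothetical comments: (position in seconds, comment text).
comments = [
    (754.2, "Logo is cut off at the right edge."),
    (12.0, "Intro music is louder than the narration."),
    (3671.0, "End card shows last year's date."),
]

# Sorting by position lists the feedback in playback order.
for position, text in sorted(comments):
    print(f"[{to_timestamp(position)}] {text}")
```

Storing the position as a number and formatting it only for display keeps the comments easy to sort and lets a player seek straight to the right frame.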

Collating Feedback and Resolving Issues

Once the QA reviewers give their feedback, it is all collated on a centralized dashboard for the content owners and other collaborators to look at. Here, the feedback can be assigned as tasks to the collaborators working on it.

Along with this, other details such as the description, technical details for web pages, deadline, phases, etc. are included to enrich the task and make it easier to resolve.
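As a rough picture of what an enriched task might carry, the sketch below models the details mentioned above (description, technical details, deadline, phase) plus an assignee. The `Task` class and `open_tasks_by_assignee` function are hypothetical and only illustrate the idea; they are not zipBoard’s data model.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Task:
    """A feedback item assigned as work; field names are illustrative only."""
    description: str
    assignee: str
    deadline: date
    phase: str                   # e.g. "design", "development", "final review"
    technical_details: str = ""  # e.g. browser and screen size for web pages
    resolved: bool = False

    def is_overdue(self, today: date) -> bool:
        """An unresolved task is overdue once its deadline has passed."""
        return not self.resolved and today > self.deadline


def open_tasks_by_assignee(tasks: list[Task]) -> dict[str, list[Task]]:
    """Group unresolved tasks per assignee, earliest deadline first."""
    grouped: dict[str, list[Task]] = {}
    for task in sorted(tasks, key=lambda t: t.deadline):
        if not task.resolved:
            grouped.setdefault(task.assignee, []).append(task)
    return grouped


tasks = [
    Task("Fix overlapping footer links", "Dev A", date(2025, 3, 14), "development",
         technical_details="Seen at 375px viewport width"),
    Task("Replace blurry hero image", "Designer B", date(2025, 3, 10), "design"),
    Task("Correct typo in slide 4", "Dev A", date(2025, 3, 7), "development",
         resolved=True),
]
for assignee, items in open_tasks_by_assignee(tasks).items():
    print(assignee, [t.description for t in items])
```

Keeping the deadline and phase on the task itself means a dashboard can filter or sort without anyone re-reading the original comment thread.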

The Long-Term Value of Good QA Review Processes

Building an efficient and effective QA review process, and sticking to a cycle, not only creates high-quality content for the audience but also ensures that the entire team behind it is well managed. Some of the key benefits are:

- Empowering content creators

- Enhancing your management style

- Aligning the creator with the company goals

- Making your clients happier

- Staying up to date

- Efficient monitoring of KPI and continuous optimization

Conclusion

Adding more resources won’t solve a quality issue without a fundamental QA review strategy. It will make a huge difference in your results if you develop a review strategy with the product owner, your development team, and other QA reviewers. No matter what kind of method you use, whether agile, scrum, or more traditional, a dedicated QA review tool will make your job that much easier.

As you begin implementing these workflows and steps, you should see benefits in the way your team works, as well as in the speed at which you can plan, create, review, and publish your content. Don’t forget to get your free eBook of this QA review playbook.

Author’s bio:

Gaurav is a SaaS Marketer at zipBoard. While earning his degree in CSE at KIIT, Bhubaneswar, he rediscovered his inner love for creativity as he got into his first social internship. If he isn’t busy working, you can find him around his friends/family or enjoying a good football match or a passionate discussion over it, whichever works.

©️ Copyright 2025 zipBoard Tech. All rights reserved.